Transitioning Ethereum to proof-of-stake changed how transactions ultimately land on-chain. Like clockwork, every 12 seconds a validator has the opportunity to add a block to the beacon chain. But this process isn’t necessarily straightforward. And several actors have already exploited flaws in the system. In this webinar, Chris Meisl, CTO & Co-Founder of Blocknative, examines the storage architecture, transactional framework, and consensus mechanisms that form the foundation of Ethereum's decentralized infrastructure.

A full transcript is available below and the slides can be found here.

Transcript

Setting the Stage: The Consensus Layer and Execution Layer

In this webinar, we will be talking about slots and what goes on inside of a slot. In order to get there, we need to talk about consensus, and what transaction finality means. Based on the experience we have at Blocknative, operating both builders and a relay, we can actually see what sort of dynamics happen at these levels. So we'll take a little journey that will eventually get us to the details of the slot, and more importantly, how slots actually play out in the real world.

To accommodate a wide variety of experience levels, I'm going to start with some very general material and then get into the details. So, as a quick reminder, Ethereum is now essentially two chains. You have the Beacon Chain, which represents the consensus layer and is what all the validators run. And then, you have the execution layer, which is running good old Geth in order to get blocks.

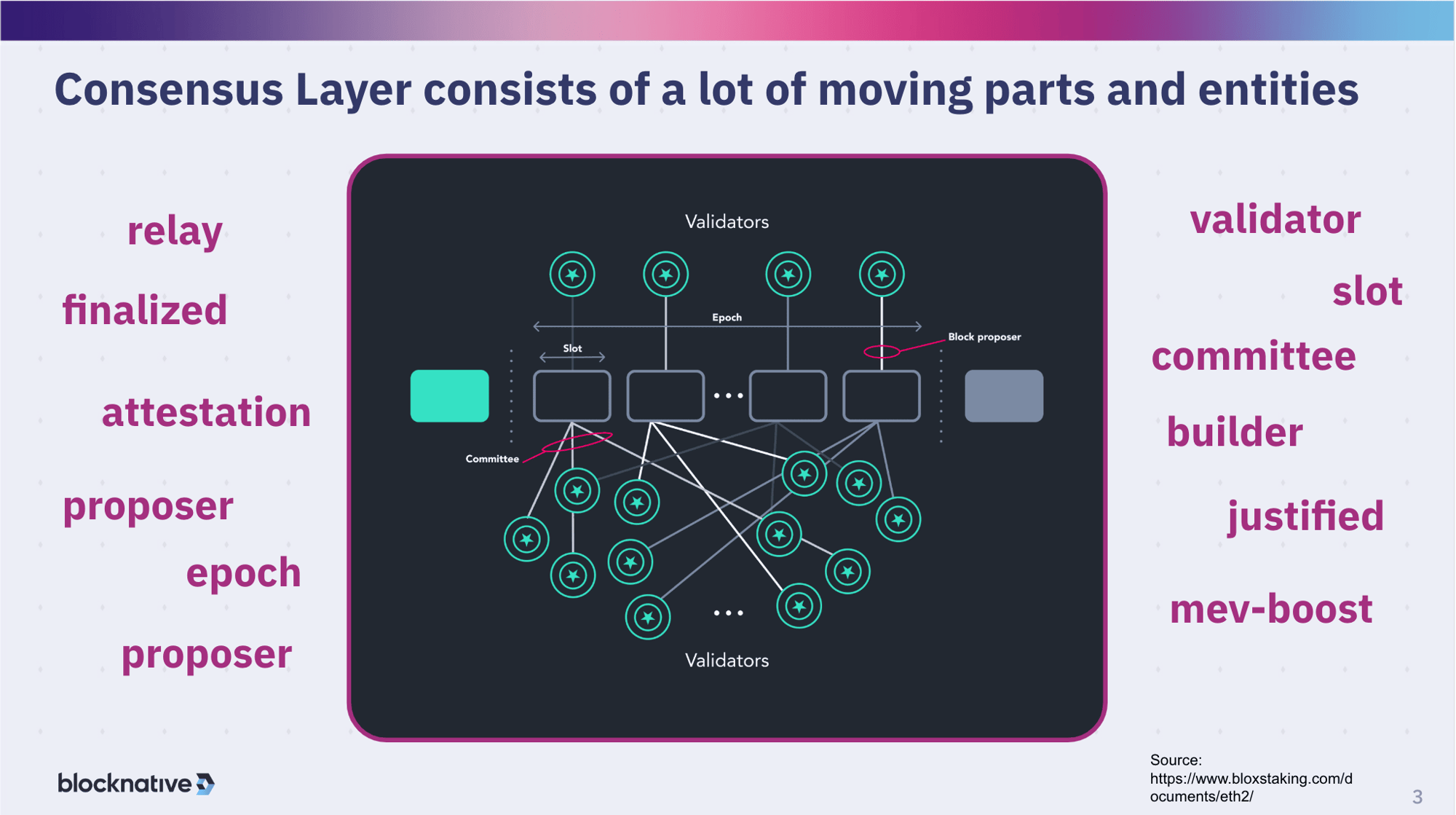

We're going to be focusing mostly on the consensus layer here, because it's all about what the validators are doing to get consensus on slots, but there is some overlap. Specifically, the execution layer is where the value is, whether from the public mempool or through MEV, that the validators receive by validating the block.

The consensus layer has a lot of moving parts: validators (a subset of which are proposers and a subset of which are part of a committee at any given time), epochs, and slots. Additionally, we have relays, builders, and MEV-Boost. We will touch on each of these and discuss how they work together. So let's break this down and dive right in.

Transaction Finality

The goal of the consensus layer is to get transaction finality. Finality means that the transaction can no longer be changed. And of course, on blockchains, transactions come in groups called blocks. Finalized blocks can't be changed. This is actually a key difference from proof-of-work (PoW).

Under PoW, there was no strong sense of finality. Instead, the more blocks built on top of the block containing your transaction, the more secure you felt that the transaction wouldn't be reverted or changed by a reorg or fork. Initial confirmation was weak and became stronger over time, as the likelihood diminished of uncles (a sort of mini-reorg) or more significant multi-block reorgs that changed the chain state. Because of this, a lot of applications would wait for five or 10 blocks before they reported confirmation to their users, since reorgs of one kind or another were happening all the time.

Additionally, there was no real sense of time under PoW, just block number, because the time it took to create a new block was highly variable. Though the overall average time was 12, 13, or 14 seconds, at an individual level, block creation could range anywhere from one to 50 seconds.

Proof-of-Stake (PoS) introduces the idea of a safe head, or justified block. If you go farther back, you have a finalized block, which is a block that cannot be reverted. By this I mean that while the block technically could be reverted, the cost of that reversion would be so extreme (1/3 of the total stake in Ethereum, or many billions of dollars at this point and growing) that for all practical purposes you can say the reversion won't happen. So there is a much stronger sense of an actual state where you can say that this won't change, and you can build additional business value and logic around the fact that it won't revert in any way.

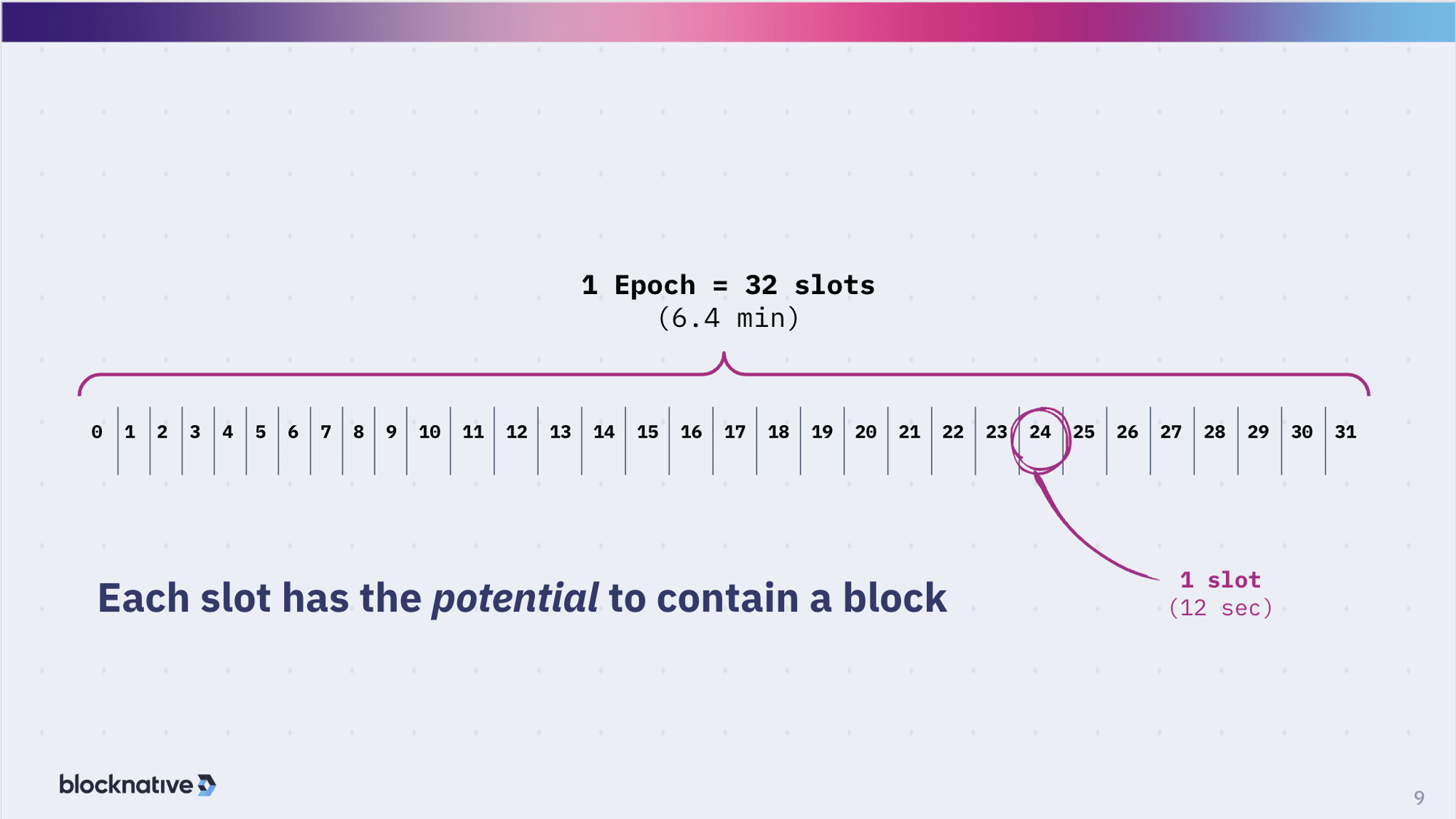

Of course, PoS also introduces the concept of regular time intervals. Now there is an actual clock: a slot occurs every 12 seconds, and every 6.4 minutes you have a new epoch. So today with proof-of-stake in Ethereum, you can very confidently say that a block has been finalized and is not going to change, and you can base a lot of your business logic on that, after two epochs, which is about 13 minutes (2 x 6.4 = 12.8).

Understanding Epochs and Slots

Let's get into some of these terms: What's an "epoch" and what's a "slot"? A slot occurs every 12 seconds. This is not an approximation or an average but an actual 12 seconds. You can compute the slot number from the time, and you can compute the time (or time range) from the slot number. A slot is an opportunity for there to be a block in the chain. Most slots contain a block, but it's not required. So there is this concept of a missed slot, which is basically empty. Then you roll up 32 slots into an epoch, which is 32 x 12 seconds = 384 seconds = 6.4 minutes. Those are the basic units that are used by the system to determine the level of finality of transactions.
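Because slots follow a fixed clock, converting between wall-clock time and slot numbers is plain integer arithmetic. The sketch below assumes the mainnet Beacon Chain genesis timestamp (2020-12-01 12:00:23 UTC), which is not stated in the talk; the function names are illustrative.

```python
# Assumption: mainnet Beacon Chain genesis, 2020-12-01 12:00:23 UTC.
GENESIS_TIME = 1_606_824_023
SECONDS_PER_SLOT = 12
SLOTS_PER_EPOCH = 32
SECONDS_PER_EPOCH = SECONDS_PER_SLOT * SLOTS_PER_EPOCH  # 384 s = 6.4 minutes


def slot_at(unix_time: int) -> int:
    """Return the slot that contains the given Unix timestamp."""
    if unix_time < GENESIS_TIME:
        raise ValueError("timestamp is before Beacon Chain genesis")
    return (unix_time - GENESIS_TIME) // SECONDS_PER_SLOT


def slot_start_time(slot: int) -> int:
    """Return the Unix timestamp at which the given slot begins."""
    return GENESIS_TIME + slot * SECONDS_PER_SLOT


def epoch_of(slot: int) -> int:
    """Return the epoch that the given slot belongs to."""
    return slot // SLOTS_PER_EPOCH
```

A slot covers the half-open range from `slot_start_time(slot)` up to, but not including, `slot_start_time(slot + 1)`.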

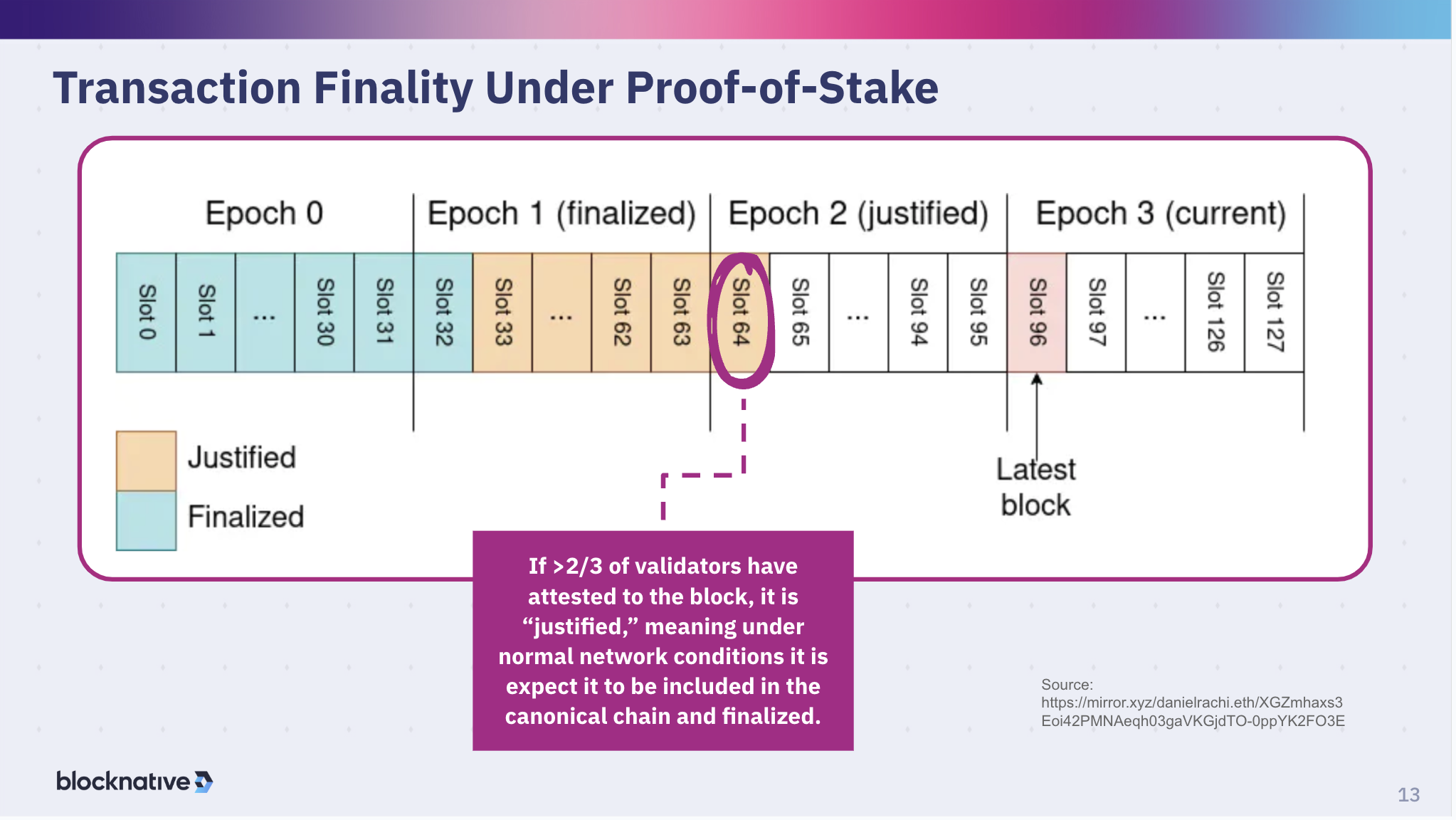

Under proof-of-stake, you have these epochs containing 32 slots. As you move forward in time, you have validators that are proposing a block (we will skip this for now) and, more importantly, validators attesting to the block that was proposed for a particular slot. As those attestations come in and reach a certain level, the block is considered "justified". If it is far enough back in time, two epochs ago, you get to a finalized state. That is the point at which the amount of ETH required to overturn it is so high that you can consider it extremely safe. So let's actually walk through this timeline.

The first slot at the beginning of every epoch is special and is called a "checkpoint" slot. The checkpoint slot is used to say that the previous epoch's state is locked in: the first slot is saying that the prior epoch is now finalized. Slot 64, in this case, is considered justified. Epoch one is listed as finalized here. Strictly speaking, it's only the first slot in it that is finalized, but that is the nomenclature we use.

At that point, validators vote for pairs of checkpoints: the checkpoint for epoch n - 1 (in this case "n" is epoch three, so that is epoch two) and the checkpoint for epoch n - 2. As long as two thirds of the validators are honest and put their stake against that, those slots are upgraded to the justified and finalized states. And then that makes the previous epoch, now epoch zero, fully finalized and non-revertible.

Now the previous epoch gets into a justified state, the precursor to being finalized, which many applications consider a strong enough indicator of finality, in other words that nothing's going to happen. It's pretty hard to undo the justified state, even though it's not as strong as a finalized state.

And of course, the n - 2 checkpoint reaches the finalized state at that point. The goal of all this is to get to the finalized state.

Now, what's actually going on, technically, is that there are two components to the consensus algorithm: Casper and LMD GHOST. Casper, the Friendly Finality Gadget (FFG), is the overlay that makes decisions based on validator weight. Don't think of it in terms of the number of validators but rather the validator weight, because each validator represents a certain amount of stake. You take the aggregate stake of all of the validators that are attesting to a particular slot and a particular epoch, and once that reaches the two-thirds threshold you are in a safe place. There are detailed rules inside of Casper that add weights in different configurations in order to make this very efficient. But it is this Casper overlay that really drives whether a checkpoint is set as justified or finalized, so it is very tied to the attestations.
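To make the "weight, not head count" point concrete, here is a minimal sketch of a two-thirds supermajority check over stake. It is a simplification for illustration, not Casper's actual rule set; the validator names and balances are hypothetical.

```python
def attesting_weight(balances: dict[str, int], attesters: set[str]) -> int:
    """Sum the stake (in ETH) behind the validators that attested."""
    return sum(balances[v] for v in attesters)


def has_supermajority(attesting_stake: int, total_stake: int) -> bool:
    """True when at least two thirds of the total stake has attested.

    Integer arithmetic avoids rounding problems right at the threshold.
    """
    return 3 * attesting_stake >= 2 * total_stake


# Hypothetical validator set with unequal stake.
balances = {"a": 32, "b": 16, "c": 16}
total = sum(balances.values())

# Two of three validators attest, but they hold only half the stake.
two_small = has_supermajority(attesting_weight(balances, {"b", "c"}), total)

# Two of three validators attest and together hold three quarters of the stake.
one_large = has_supermajority(attesting_weight(balances, {"a", "b"}), total)
```

In this example `two_small` is `False` and `one_large` is `True`, even though the same number of validators attested in both cases.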

The other part of the consensus algorithm is LMD GHOST, which is primarily a fork choice rule (though it does a few other things). LMD GHOST is designed to keep the timeline moving forward so that blocks keep getting built. This is the trade-off in proof-of-stake between liveness and safety. Liveness means you keep building blocks: the chain doesn't halt even if those blocks are not justified or finalized. This is what GHOST does. Those blocks may not have the attestations you need in order to ensure everything is operating nicely and smoothly, but you are continuing to build blocks. Casper is adding the safety on top of the liveness.

Liveness and safety are countervailing forces, so you have to balance them. Casper is trying to give you the safety, which is the colors in the image. GHOST is giving you the steady, live progression of blocks, which is the slots in the image.

This slide serves as a reminder that if you wanted to revert a finalized block then you would have to burn a third of the total staked ETH of the entire network.

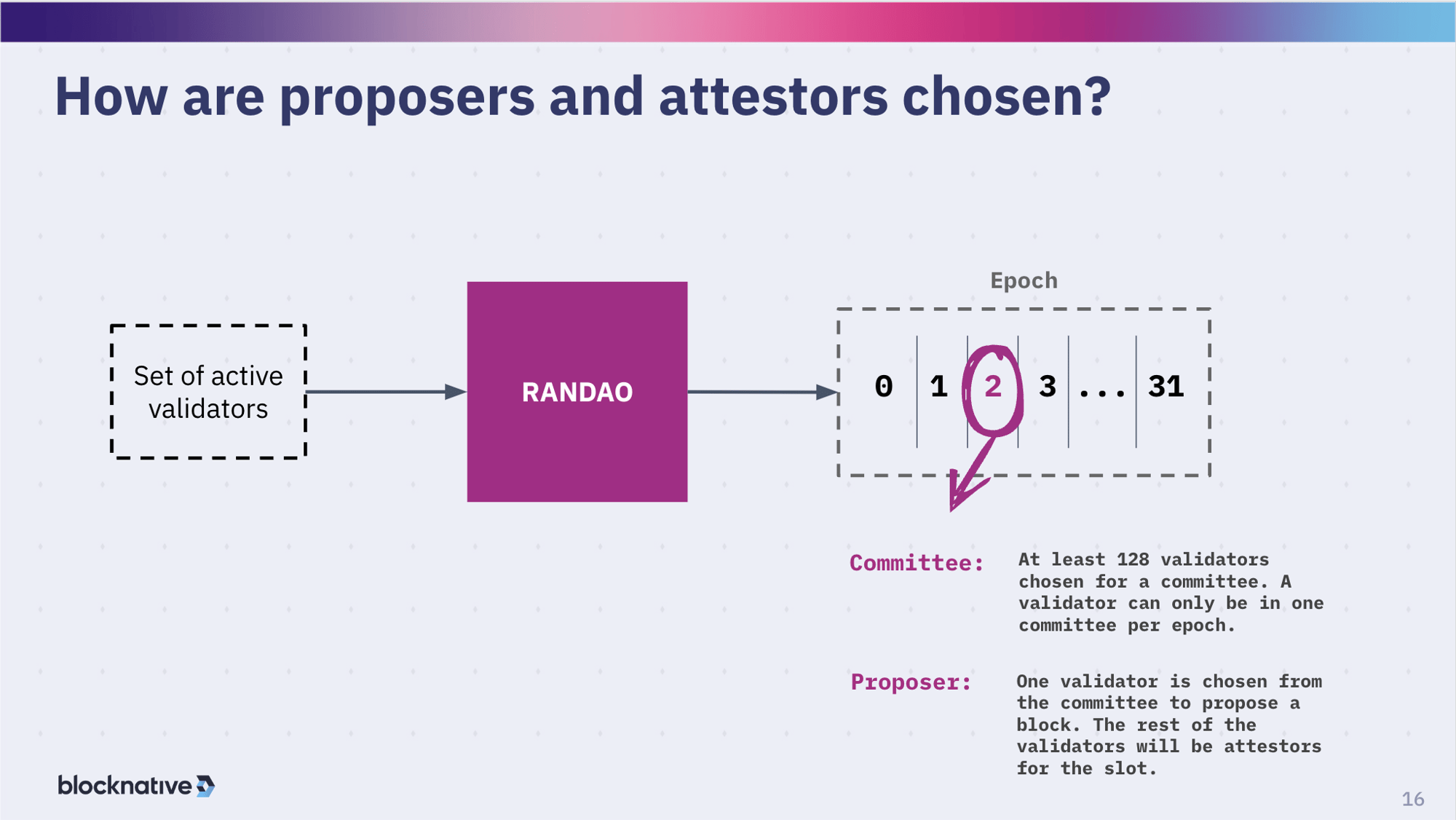

Quick reminder: not all validators are voting for or attesting to every slot, every epoch. Committees are formed pseudo-randomly across the network using RANDAO. For every slot, one validator is anointed to be the proposer within that committee. That validator proposes the block that is going to be associated with the slot. As the proposer, that validator gets the reward, assuming that the block is valid and behaves properly so that all the attestations come through okay. The 127 other validators in the committee make sure that all the slots in the epoch have all the right attestations. I bring this up because it changes with single slot finality, which needs a different approach than these small committees. But these small committees are used primarily to make the system efficient and fast.

So what happens inside a slot?

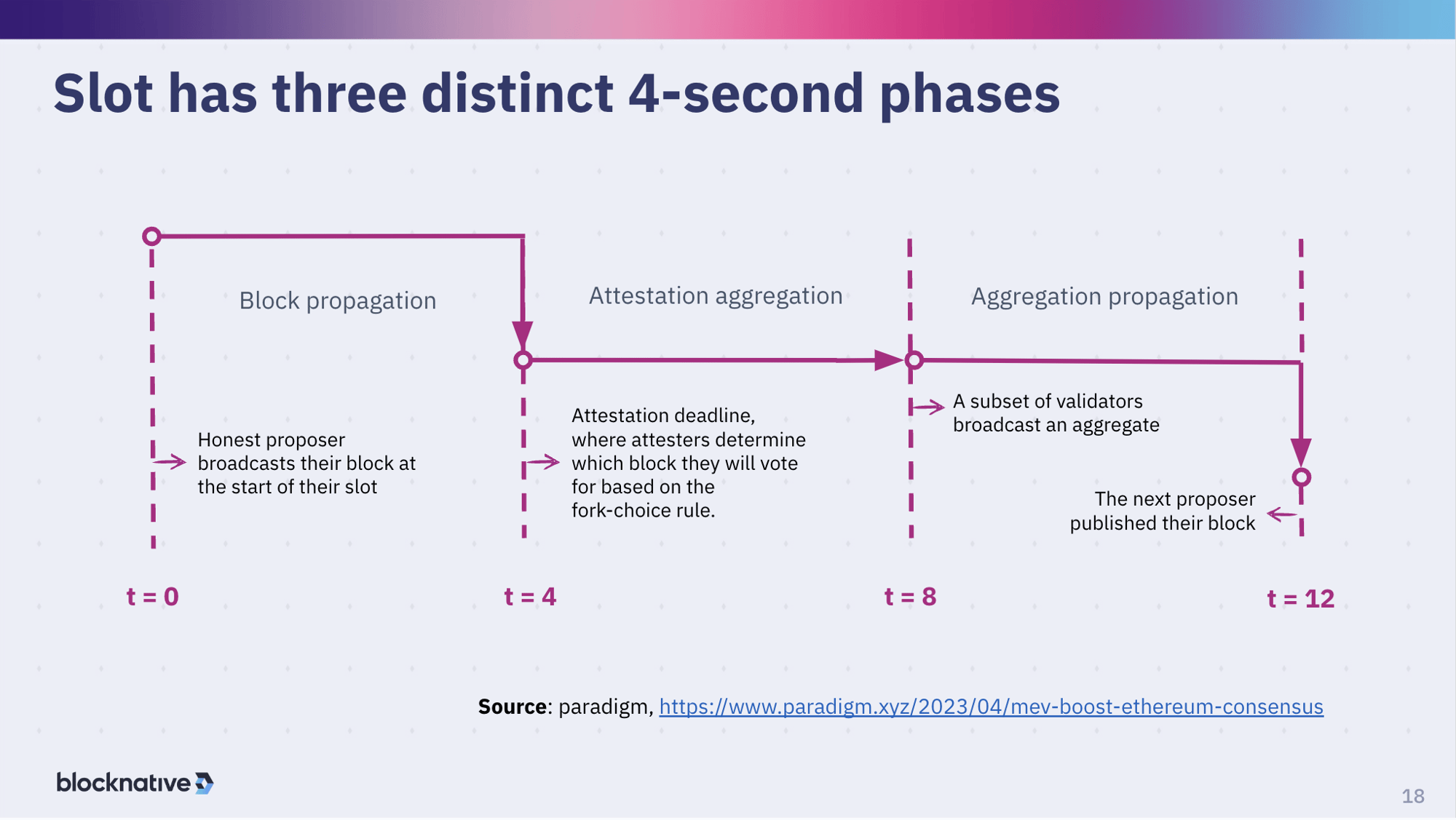

We just covered the background needed to discuss what's actually happening inside of the slot. A slot has three phases that are each approximately four seconds long. The first phase is where a block is propagated within the slot. The next phase is where attestations start to happen. Attestations are on the heavy side: there are a lot of messages and a lot of bytes moving around, since this is when the voting is going on. Then a subset of the committee of validators involved in this particular epoch, and all the slots within that epoch, is going to aggregate the attestations.
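The three-phase design can be sketched as a simple lookup from "seconds into the slot" to the phase the protocol intends for that moment. The phase labels are paraphrased from the description above, and the exact four-second boundaries are the idealized design rather than observed behavior.

```python
SECONDS_PER_SLOT = 12
PHASES = ("block propagation", "attestation", "aggregation")


def phase_at(seconds_into_slot: float) -> str:
    """Return the intended phase for an offset within a 12-second slot."""
    if not 0 <= seconds_into_slot < SECONDS_PER_SLOT:
        raise ValueError("offset must be in the range [0, 12)")
    phase_length = SECONDS_PER_SLOT / len(PHASES)  # 4.0 seconds each
    return PHASES[int(seconds_into_slot // phase_length)]
```

For example, `phase_at(2.5)` falls in block propagation and `phase_at(9)` falls in aggregation.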

Attestations use BLS signatures. BLS signatures are a bit computationally intensive, but they have a really cool aggregation feature where you can combine a bunch of signatures into one, so verifying the aggregate verifies all the signatures inside of it. The third and last phase of the 12 seconds of a slot is where all the aggregation happens. Those aggregated attestations are attached to the next proposed block. This is the design concept. This is what's supposed to happen. Let's talk about what actually happens.

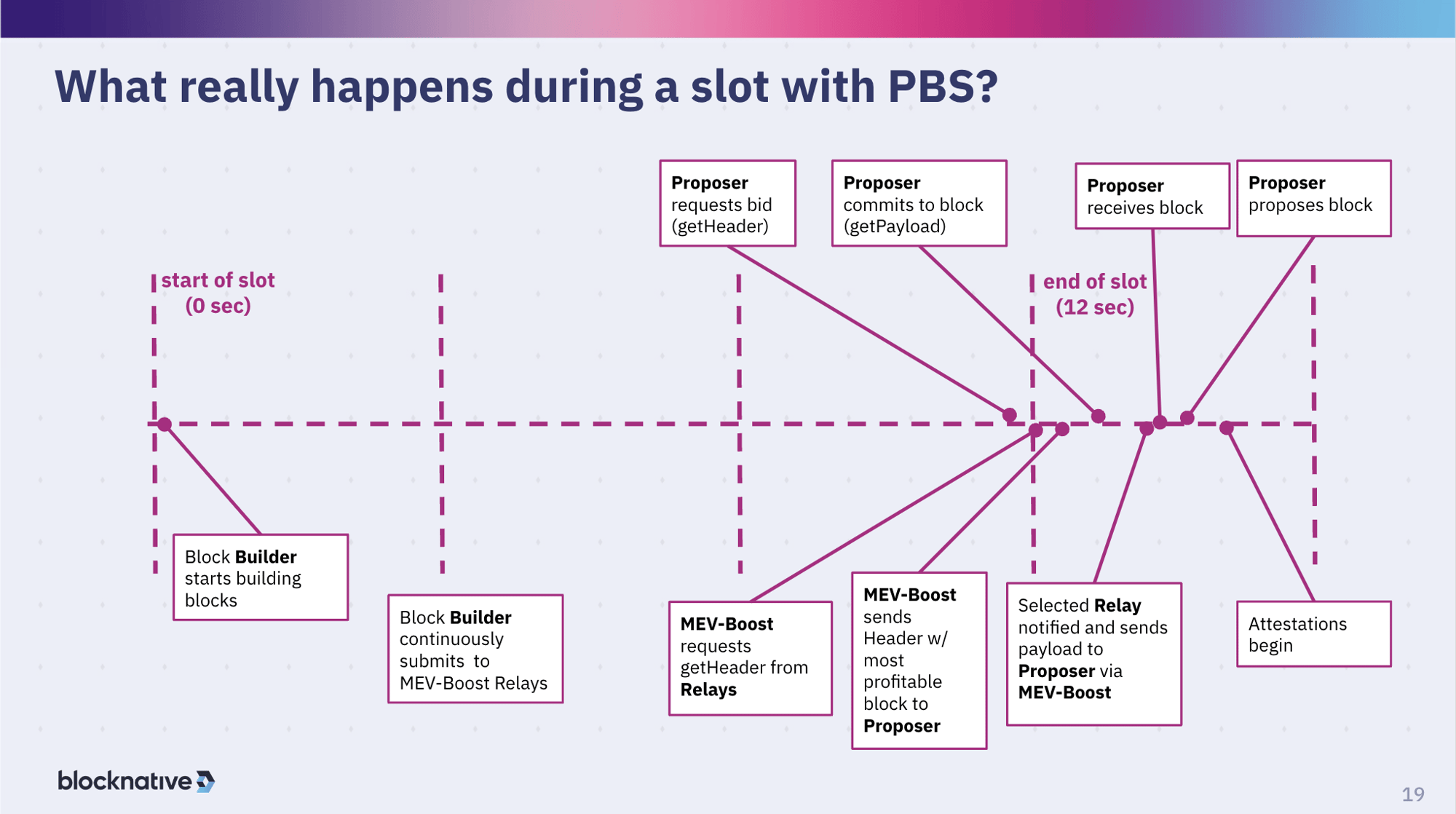

This is very much a simplification, but it gets at the guts of what's actually happening every 12 seconds on Ethereum today. At the beginning of the slot, under PBS, or Proposer-Builder Separation, you have builders that are separate from the proposers. The builder is building the block. The builder can be the validator, but doesn't necessarily have to be. Typically blocks are being built with a lot of MEV (maximal extractable value) in them. That makes the blocks much more valuable, by hundreds of percent, making them the preferred blocks.

These builders (of MEV blocks) have relationships with searchers, which are entities looking for opportunities to find MEV. The MEV could be atomic MEV, where you detect a transaction in the public mempool and then back run, front run, or sandwich it to extract value. There is also a lot of MEV between a centralized exchange and a decentralized exchange. These searchers are working with price oracles or Binance, things like that. There's also long-tail MEV, which involves buying now and selling a lot later, maybe because you're watching social media very carefully and understand that something is going to go up in value. Or maybe you have some way of detecting when something is going to become available, and you take advantage of that.

So there are many different kinds of MEV. Builders work with searchers that construct bundles, essentially private transactions that are combinations of one or more transactions, including maybe some public transactions. The searcher sends those bundles to a builder. The builder then plays this game of trying to find the optimal combination to make the most valuable block, and that's the block that they then submit to a relay.

Starting at the beginning of a slot, the builder starts building and starts submitting to relays. Throughout this period they keep submitting to the relay.

Next, the proposer, the validator anointed to propose a block for the current slot, is going to request the highest block bid from the relays that it is connected to. In this case, the validator is using MEV-Boost, which is a proxy that talks to whichever relays the validator is connected to and acts as a simple auction mechanism. MEV-Boost asks for bids from all of the relays (this is called a get-header request) and then returns the highest bid to the proposer. Then the proposer will commit to that block by calling get-payload.
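The auction step described above boils down to "collect one bid per relay, keep the most valuable". The sketch below models only that selection; the `Bid` type, relay names, and values are hypothetical, and nothing here talks to a real relay or reflects MEV-Boost's actual code.

```python
from dataclasses import dataclass
from typing import Optional


@dataclass(frozen=True)
class Bid:
    relay: str        # which relay returned this header (hypothetical name)
    block_hash: str   # hash of the block the header commits to
    value_wei: int    # payment offered to the proposer


def best_bid(bids_by_relay: dict[str, Optional[Bid]]) -> Optional[Bid]:
    """Return the highest-value bid, skipping relays that had nothing to offer."""
    bids = [bid for bid in bids_by_relay.values() if bid is not None]
    return max(bids, key=lambda bid: bid.value_wei, default=None)


# Hypothetical responses from one get-header round.
responses = {
    "relay-1": Bid("relay-1", "0xaaa", 50_000_000_000_000_000),
    "relay-2": Bid("relay-2", "0xbbb", 72_000_000_000_000_000),
    "relay-3": None,  # relay had no bid for this slot
}
chosen = best_bid(responses)
```

Here `chosen` is the `relay-2` bid, and an empty round yields `None`, in which case a proposer would need some fallback for producing a block.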

Get-payload is an action by the proposer where they sign their commitment to the header in order to get the payload. This ensures they don't get to see the payload until they've committed to actually proposing it, because the header contains the block hash, which is deterministic in terms of the contents of the block. If you change the contents of the block, the hash changes. Therefore by committing to that hash, you've committed to the block. This is a way for builders and relays to deliver high-value blocks to the proposer without the proposer, in theory, being able to mess with the block, for example by changing some of the transactions so that more value goes to the proposer.
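The reason a signed hash pins down the block can be shown with any cryptographic hash. The sketch below uses SHA-256 from the Python standard library as a stand-in; Ethereum's real block hash is computed differently (Keccak-256 over the encoded block header), and the transaction bytes are hypothetical.

```python
import hashlib


def block_hash(transactions: list[bytes]) -> str:
    """Stand-in block hash: SHA-256 over length-prefixed transactions."""
    digest = hashlib.sha256()
    for tx in transactions:
        # The length prefix keeps the encoding unambiguous across tx boundaries.
        digest.update(len(tx).to_bytes(4, "big"))
        digest.update(tx)
    return digest.hexdigest()


def matches_commitment(committed_hash: str, transactions: list[bytes]) -> bool:
    """True when the given contents hash to the value that was committed to."""
    return block_hash(transactions) == committed_hash


original = [b"searcher-bundle", b"public-tx"]
committed = block_hash(original)  # what the proposer signs, sight unseen

# Swapping a single transaction yields a different hash,
# so the altered block no longer matches the signed commitment.
tampered = [b"proposer-frontrun", b"public-tx"]
```

With these values, `matches_commitment(committed, original)` holds and `matches_commitment(committed, tampered)` does not.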

Once the proposer commits, the relay will release the block to the proposer. The proposer then receives that block and proposes it, propagating it out onto the network. Meanwhile, the relay also propagates it to help things along. In fact, there's been a recent change where any relay that knew about this block (remember, builders might send to multiple relays) will also propagate it. The idea is to get block propagation going as fast as possible. There's a very good reason for this that we'll get into a little later.

Once that proposed block starts to propagate, all the other validators that are part of the committee can start to do their attestations. Then the attestations get aggregated, and so on. That is the sequence. It may not be clear at first, but there is something very different between this diagram and the one before. In the previous diagram (slide 18) the timeline goes from t = 0 to t = 12 seconds, and in this one (slide 19) it goes from t = 0 to t = 16 seconds. You will see that almost all of the interesting activity is actually happening after the end of those first twelve seconds, the slot during which the block was being built. It lands in the first four seconds of the next slot, the block propagation phase.

This is super important because block propagation typically doesn't occur at t = 0. It occurs much closer to t = 4. That shrinks the window for all of the attestations to come in and plays a key role in missed slots. If you wait too long as a proposer, your block may not propagate fast enough to the other members of the committee, and they might say they don't have a block. For them the current head is the previous block, which creates a missed slot. This is a very possible scenario, driven by the fact that the value in blocks is almost always going up, which incentivizes the proposer to wait as long as possible. But the longer they wait, the more they risk getting absolutely nothing, because they can miss the slot by waiting too long.

What does the relay see?

This is where it gets interesting. A relay is in a trusted position between the builders and the validators. In other words, what the validator gets is what the relays see: the aggregate of all the relays that a particular validator is looking at.

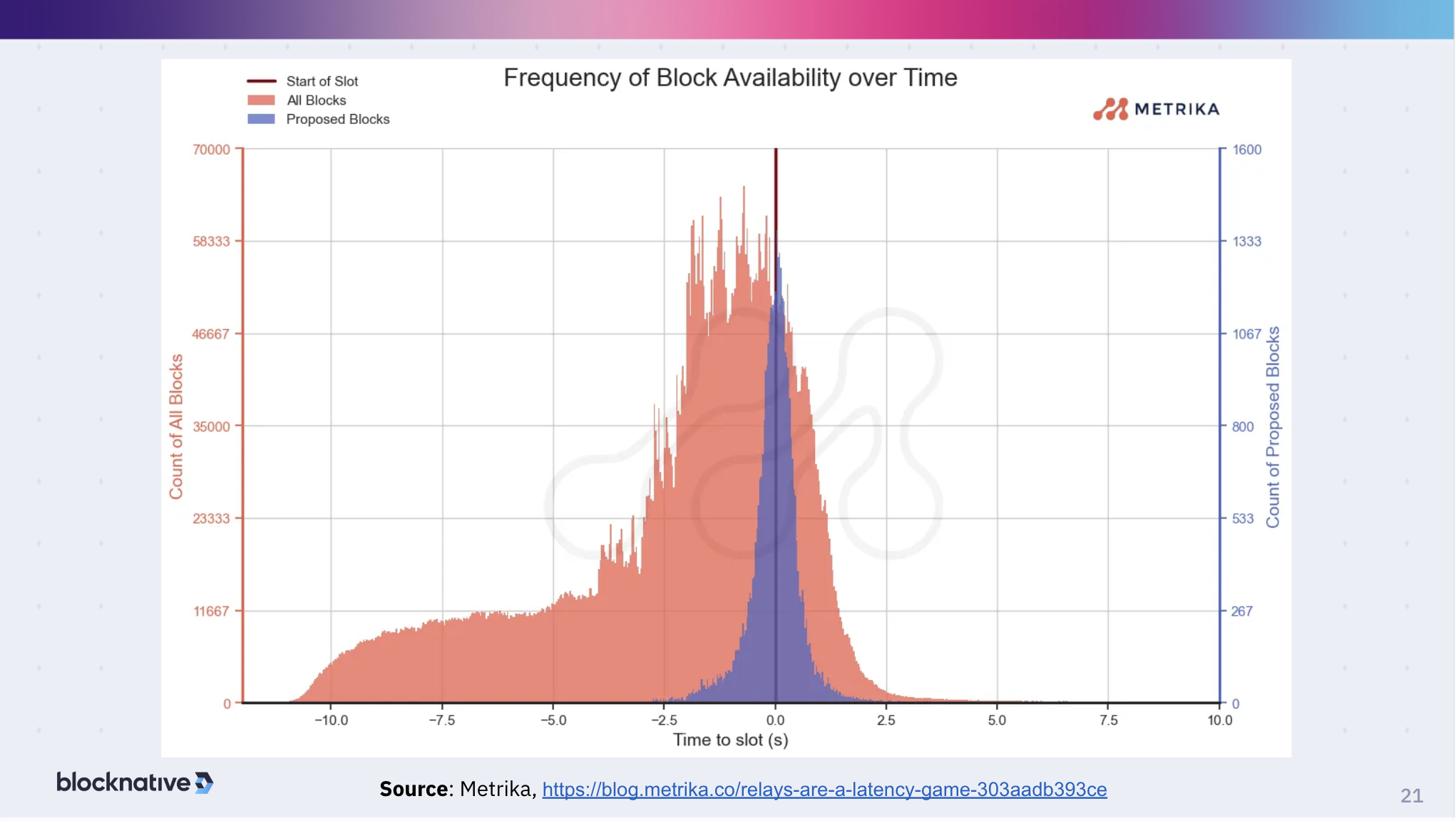

This chart by Metrika is an analysis across all of the major relays showing some of the behavior that we see. You'll see that at the start of the slot, blocks are being built. This is relays reporting that blocks are available, which is all done in the open: not the contents of the blocks, just the headers and the bids.

You can see that block building starts pretty early in the slot. Remember, this building happens during the slot before the one that is going to have that block in it, which is why the graph shows negative times. As you get towards the end of the slot, block building really kicks in because that's when the value is getting really good. Relays therefore make a huge number of blocks available. Then the actual block proposals are all happening near that boundary. A bunch of proposals happen before the end of the slot. Before t = 0 there are safe proposals that ensure propagation happens so you don't miss out on the slot. There are also a lot of actors that wait until very late, 2.5 seconds in, who are taking their chances with propagation delays. So that's the general distribution of block availability over time relative to that slot boundary. You can call it t = 0 or t = 12.

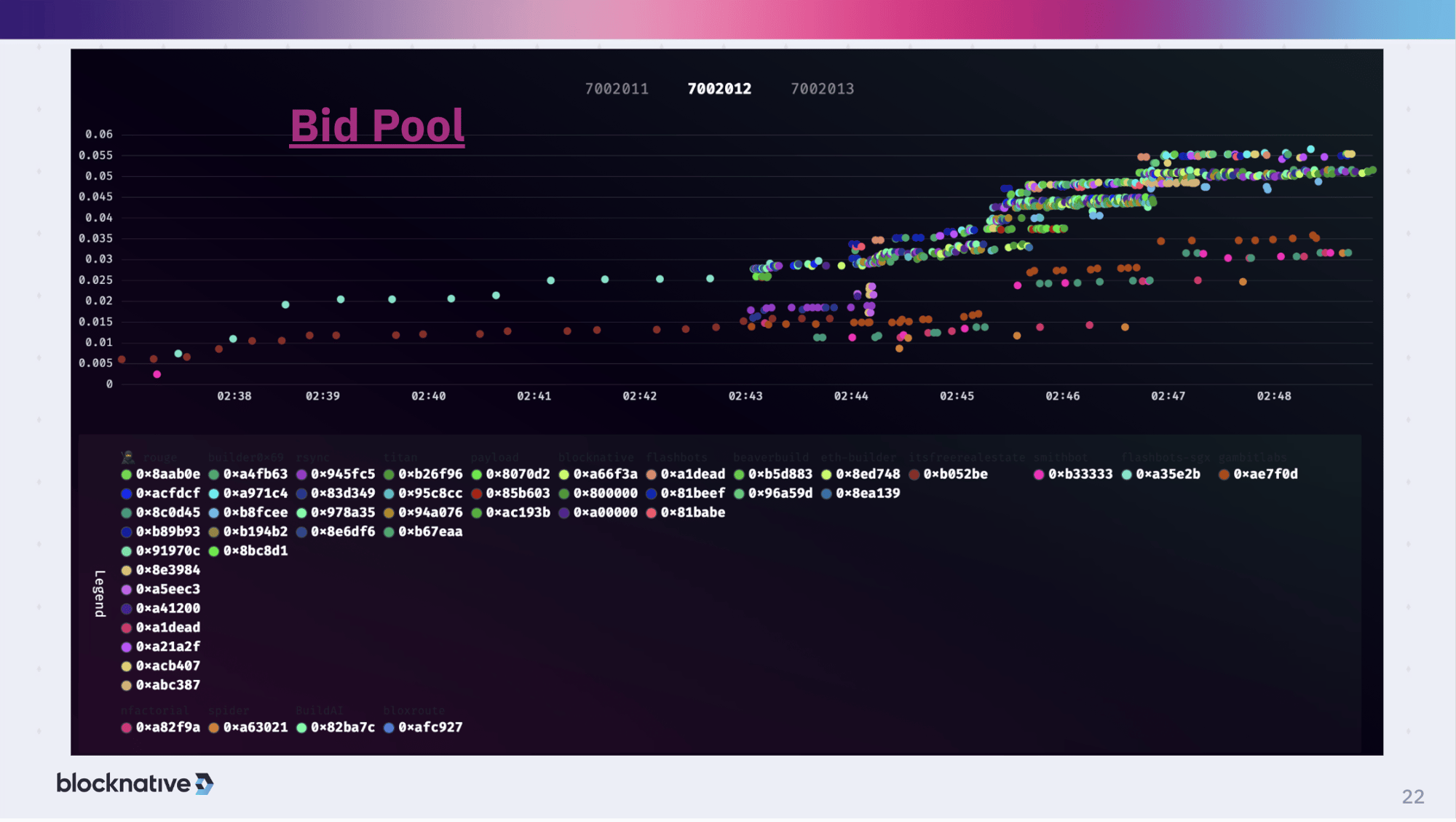

Bid pool

What the relay actually sees for a given slot is that at the beginning you have these bids coming in. The color represents the builder and the height on the vertical scale represents the value of the current bid. There are some builders that are constantly building and sending blocks to relays. But about halfway through the slot, things get interesting when a lot more builders start to kick in. This is the typical behavior we see operating the Blocknative Relay. It's also the behavior we use in our own builder: don't bother building until halfway into the slot, because it's just a waste of time. Early blocks won't be accepted.

As you get towards the end of the slot, building activity really kicks into high gear. You see some striation indicating incremental improvements to a block. Then some big MEV opportunity shows up and the bids jump up to the next level, and the next level, and so on. Not all builders see everything from a searcher; different builders have access to different searchers. That's why some of the builders, like the orange one here, didn't have access to some interesting opportunities.

Now, you might think that the very highest dot (the teal or turquoise colored one here) would win. But that's not necessarily true, because it depends on when the proposer actually says it's ready to propose and requests the block, which might happen earlier. So the winning block is not necessarily the most valuable block but rather the most valuable block at the time the proposer was ready to sign.
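The "highest bid at request time" rule can be sketched by filtering the bid pool on arrival time before taking the maximum. The `TimedBid` type, builder names, values, and timings below are hypothetical and only illustrate the selection logic.

```python
from dataclasses import dataclass
from typing import Optional


@dataclass(frozen=True)
class TimedBid:
    builder: str
    value_eth: float
    received_at: float  # seconds relative to the slot boundary (negative = before)


def winning_bid(bids: list[TimedBid], request_time: float) -> Optional[TimedBid]:
    """Return the best bid the relay already held when the proposer asked."""
    visible = [bid for bid in bids if bid.received_at <= request_time]
    return max(visible, key=lambda bid: bid.value_eth, default=None)


# Hypothetical bid pool for one slot.
pool = [
    TimedBid("orange", 0.04, -6.0),
    TimedBid("teal", 0.06, -1.0),
    TimedBid("teal", 0.11, 1.5),  # the highest dot, but it arrives late
]

early = winning_bid(pool, request_time=0.2)
late = winning_bid(pool, request_time=2.0)
```

A proposer asking at 0.2 seconds gets the 0.06 bid, while one willing to wait until 2.0 seconds gets the 0.11 bid, at the cost of less time for the block to propagate.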

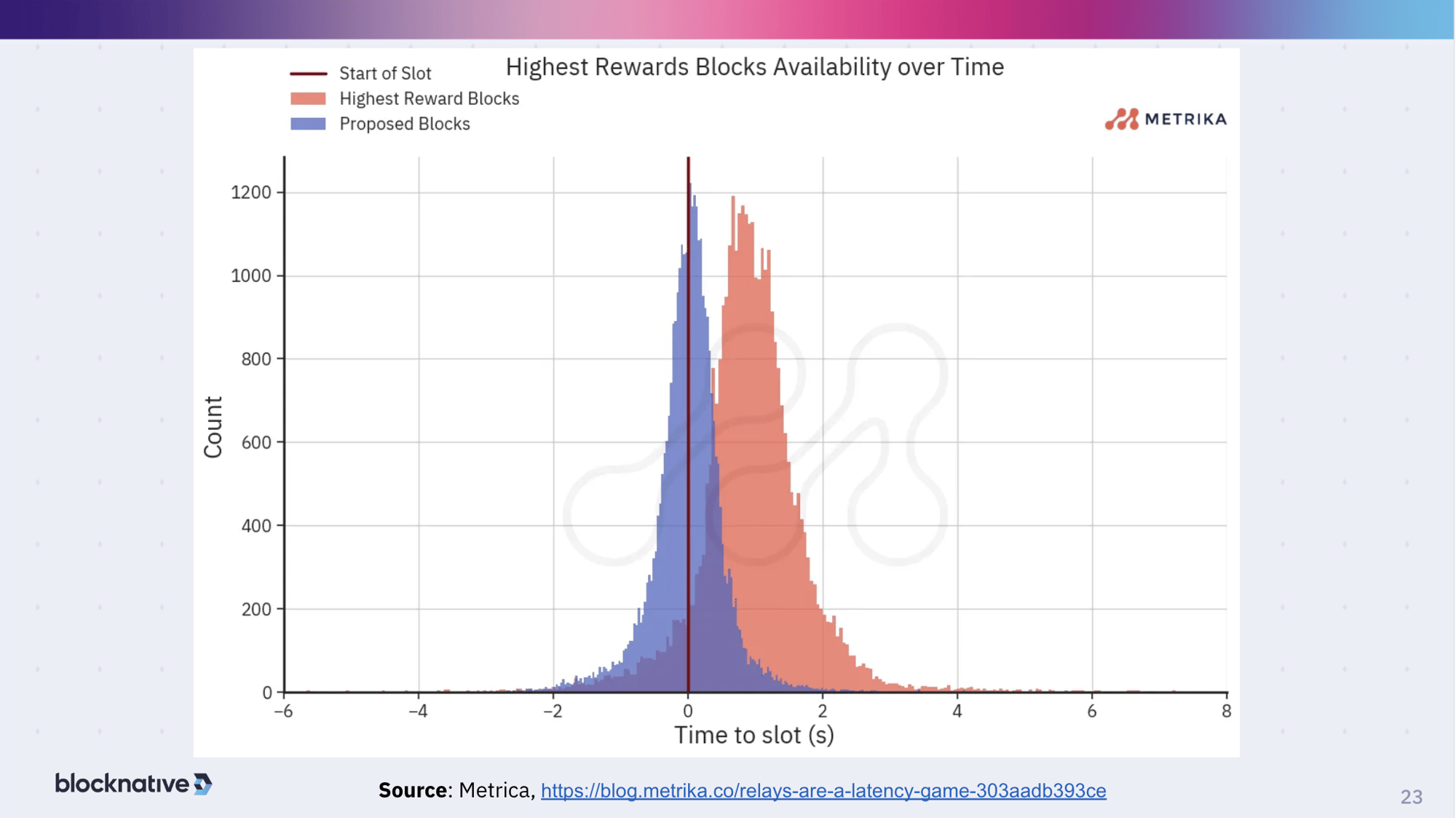

That's shown in this graph by Metrika as well: the distribution of the highest block rewards is offset to the right of the distribution of proposals. If proposers waited longer in order to get the higher bids, they would be more profitable purely from a bid perspective. Now, of course, they're taking a chance on latency: the risk that their block won't propagate in time for attestations to complete sufficiently to fill the slot. This is the game that a validator has to play, a bird in the hand versus two in the bush: do you want to take value x, or value x plus something more at a higher risk? This is why some validators will operate with some fancy networking stacks in order to push the boundaries and get further into this highest-block-reward regime rather than being near the beginning of the slot.

Before I continue with the deck I want to go live with some of the things we talked about. This is what just happened - this free real estate is this builder. This builder - Flashbots - is doing its thing. Then when things kick in you can see Titan builder, Flashbots, Blocknative builder, rsync builder. Then things got super competitive, and somewhere around here is probably where the winning block was chosen. And it continued to go up - which is the Metrika chart that I showed earlier - where there is more value but it is too risky to go for that value because of the latency concerns you have in actually getting that proposed. You can see that these jumps usually mean that a transaction occurred in the public mempool, searchers jumped on it, and builders then picked it up and leapt into the new state. And you can see that most builders jumped up, because searchers tend to send their bundles to most of the major builders. But sometimes you see that very few builders leap up, and that is usually due to a price oracle change, so it is more of a CEX-DEX arbitrage opportunity. Fewer builders might engage in that, as it is a two-legged transaction and there is more risk and more capital involved. This is the domain of searcher-builders, which are builders that do their own searching because they can take better advantage of that.

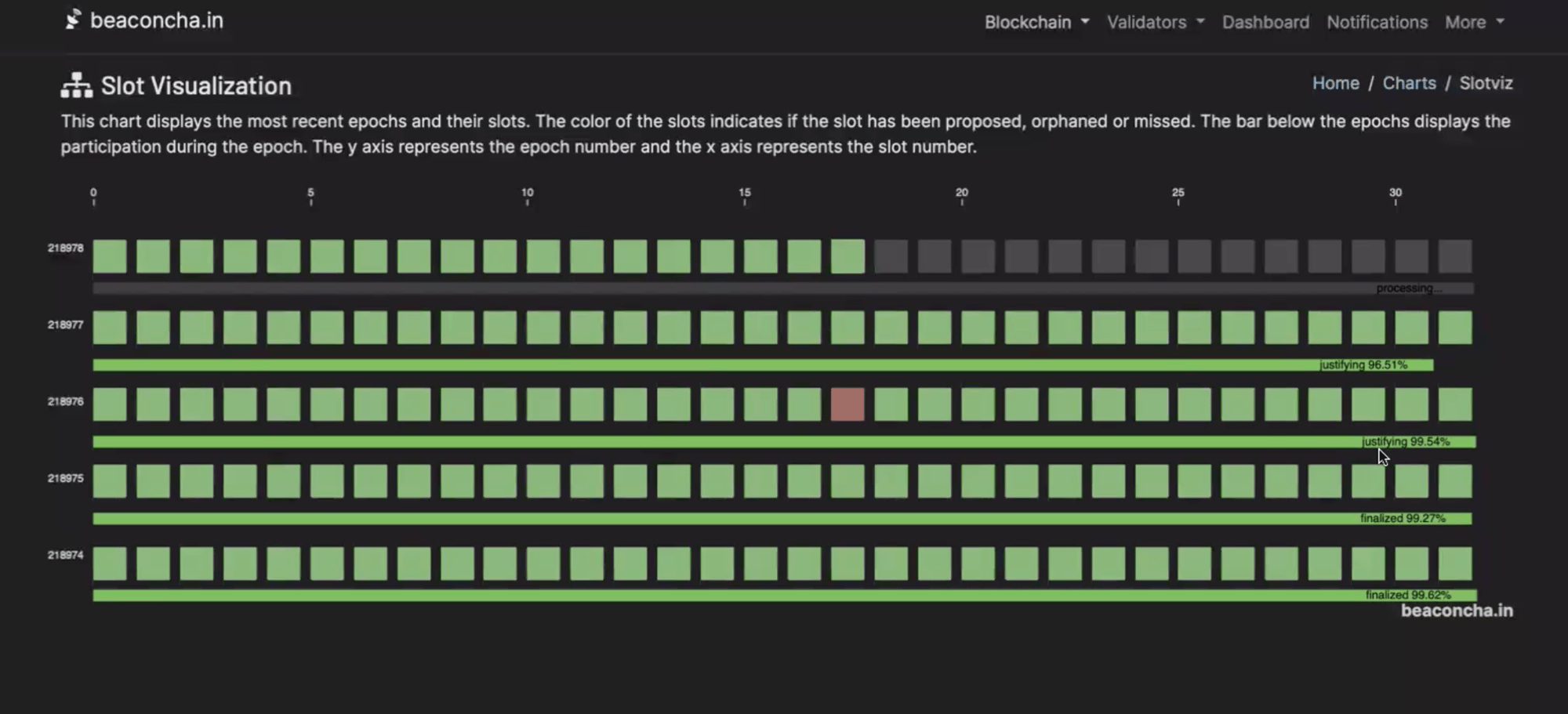

Slot visualization

Beacon Chain has a slot visualization that helps show what I was talking about earlier. Each of these rows is an epoch. At the top is the current epoch that is being populated. Each square represents a slot coming in. Green indicates a successful proposal, which means there was good attestation for it. Red indicates a missed slot. If it's green all the way across, it was an epoch in which all slots were filled. In the current epoch, you can see the slots that are justified. If you go back n - 2 from the most recent complete epoch, those are now finalized.

You can see the percentages for the weight of justification on the bottom of each epoch. In this example, the percentages are showing a strong weight. But interestingly, this could be a very low number and the chain still works. That's why there is a separation of Casper and GHOST. GHOST makes sure that this progression continues happening no matter what. And Casper is trying to get it to high percentages so that you can really trust the finalization.

One of the things that we saw during the Shapella upgrade, the most recent hard fork, was very low percentages here, especially on the Goerli testnet. This was expected, because not everyone had upgraded their nodes to support it. On Mainnet, the percentages started out looking low but then quickly got up to the 60-70% range. Now, obviously, we want to get to at least 66%, that two thirds. At that point, people started to breathe a sigh of relief that most people had upgraded their nodes and we were in good shape.

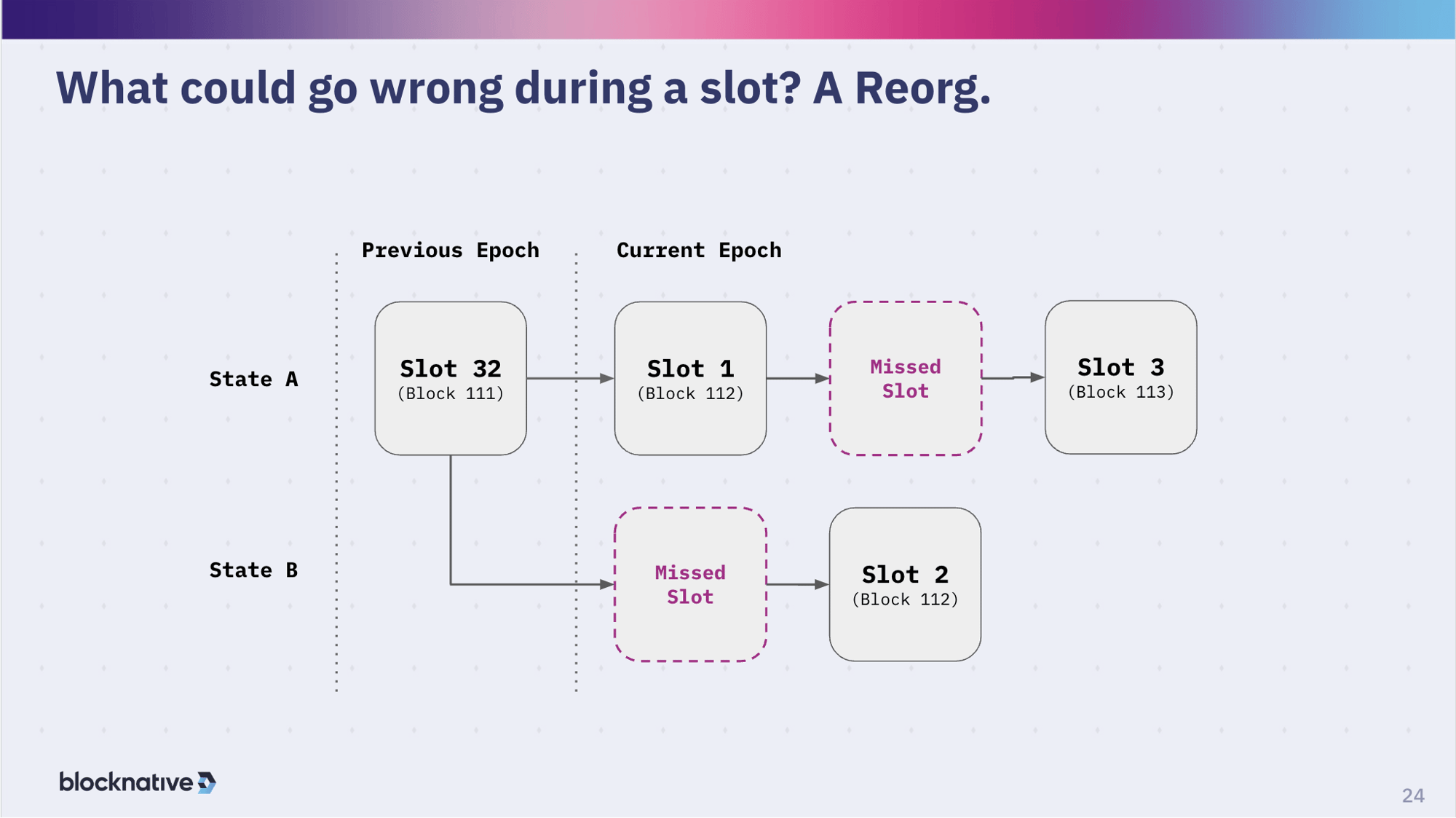

What could go wrong during a slot? A reorg.

Let's talk a bit about missed slots again. A slot comes in and you fill it with a block; that one is in the previous epoch. Then the next slot comes in, a block gets proposed, and things start chugging along. But not all the validators see it. In this case, the proposer for slot two doesn't see the block. It didn't get to them.

So when it's their turn to do a proposal, from their perspective the previous slot was missed and the current head of the chain is block 111, which is in slot 32. So they will propose block 112 here, which of course belongs to slot two. Then maybe the proposer for the next slot, slot three, did see the block in slot one but hasn't seen the block in slot two yet.

This is an example where this validator had some kind of latency issue. Not only did they not get the new information, only the older information, but their propagation is slow as well. They are receiving slowly and sending slowly, which is typical. So the next validator won't see their block, and there is a missed slot there. So now you have two missed slots. At this point, the upper chain, State A, is going to be the heavier chain, if you will, with two slots' worth of attestations rather than the one in State B. In this case, the fork choice rule will build off of State A.
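The "heavier chain wins" step can be sketched as a greatly simplified GHOST-style walk: start at the last common block and repeatedly step into the child whose subtree carries the most attestation weight. This ignores most of the real LMD GHOST rules (latest-message tracking, tie-breaking, stake balances); the block labels and unit weights are hypothetical stand-ins for the State A and State B branches described above.

```python
def subtree_weight(block: str, children: dict[str, list[str]], votes: dict[str, int]) -> int:
    """Attestation weight on a block plus everything built on top of it."""
    return votes.get(block, 0) + sum(
        subtree_weight(child, children, votes) for child in children.get(block, [])
    )


def choose_head(root: str, children: dict[str, list[str]], votes: dict[str, int]) -> str:
    """Walk from the root, always following the heaviest child subtree."""
    head = root
    while children.get(head):
        head = max(children[head], key=lambda c: subtree_weight(c, children, votes))
    return head


# Block 111 is the last block both branches agree on.
# State A: the slot-one block, with the slot-three block built on it.
# State B: block 112, proposed in slot two by the validator that never saw slot one.
children = {"111": ["A-slot1", "B-slot2"], "A-slot1": ["A-slot3"]}
votes = {"A-slot1": 1, "A-slot3": 1, "B-slot2": 1}  # one unit of weight per slot

head = choose_head("111", children, votes)
```

Here the State A branch carries two units of weight against one for State B, so `head` ends up as `"A-slot3"` and the slot-two block is left behind.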

This is a classic example of why you might have missed slots. Remember, at the epoch boundary there's extra work going on. So there might be a little extra latency to factor in, which makes this missed slot situation more likely when you are at the epoch boundaries.

This was a problem that we encountered as a relay operator more than it ought to have occurred. Our team worked pretty closely with the Prysm and the Lighthouse teams to try to figure out what was going on in order to improve the processing there so that the epoch boundary was handled more efficiently and therefore reduce the number of missed slots. Now they're much lower again.

So, this is your classic kind of reorg scenario, which is organic to how these algorithms work. But we try to tune the algorithms in order to minimize it. And we also try to tune our latency setups. Especially with relays, we run pretty highly optimized networks that are distributed around the world, with a lot of replication - not all validators necessarily do this, though some of the larger validator pools might, in order to try to minimize this. With every one of these missed slots, you might be losing a half an ETH or an ETH. It could be a lot of value.

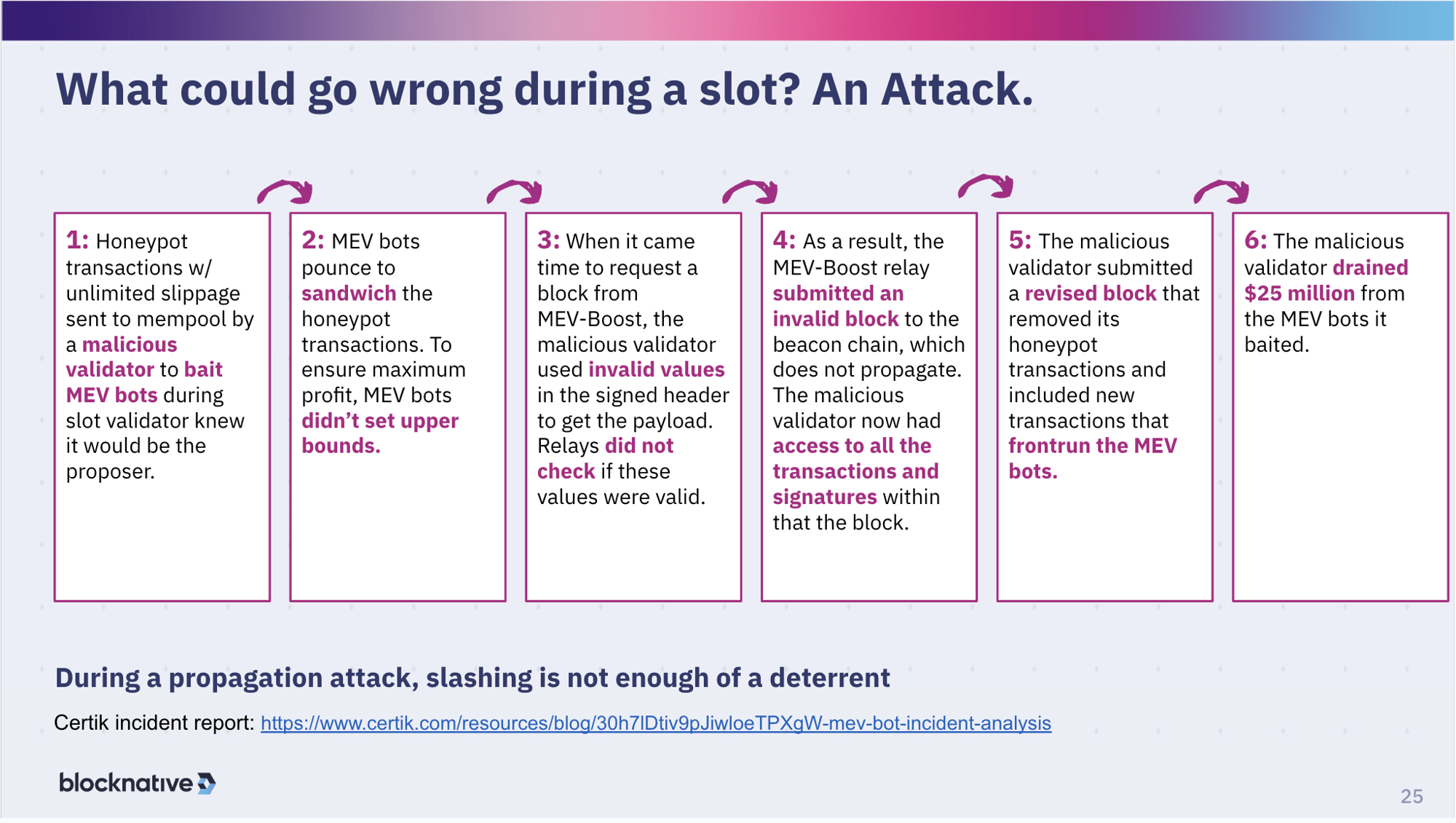

What could go wrong during a slot? An attack.

Other things can go wrong, and have. In April, we had a pretty interesting event where a validator saw an opportunity to basically fake out the system. They created honeypot transactions that were not very good trades on tokens, probably with relatively low liquidity pools and they basically didn't put any kind of boundary on the slippage for those transactions.

Bots came in and saw that and said, "oh, I can make $100 or €100 here, or I can make $1,000 or $10,000." They just ramped up and said, "Wow, there's no boundary on the slippage, so I'm going to go for everything I possibly can." These bots were about to make an absolute mint off of these transactions. So they jumped in to sandwich the heck out of them, and were ready to get an absolutely fabulous return. They could close up shop after this because they would be making so much money.

But they also didn't set any boundaries on how they were sandwiching these transactions. They were trying to get everything they could out of it because they thought someone was being a fool and they could take maximum advantage of that.

When it was time for the block to be proposed, this block had these huge sandwich bot transactions in it: the searchers sent them to builders, the builders included them, and the relays had the block available. The malicious validator set this up for a slot in which they knew they would be the proposer, because validators know a few minutes ahead of time (within the 6.4-minute epoch) if they're a proposer. So the malicious validator planned out carefully when to initiate these honeypot transactions to trigger the sequence.

The malicious validator signed the header that had this juicy, juicy block. But they did it a little bit incorrectly, in such a way the relays wouldn't check quite correctly. They could do this because relays are all open source software, which allowed the validator to identify a vulnerability. Because the bid was signed, the relays then delivered the block to that validator as they're supposed to. And they did that before actually propagating the block and therefore verifying that the signature was okay.

The block signature was not quite okay. It failed that check and didn't propagate.

Now you have a situation where this validator knows what's inside that block and it's not propagating yet. Basically, they received a block that never made it onto the network and rewrote it. They took out their little honeypot transactions, left the MEV-bot sandwich attacks, and essentially front ran those. Because these MEV attacks were also unbounded, as the bots were trying to get as much as possible from the honeypot transactions with no slippage protection, the malicious validator was able to drain $25 million or so from those bots. They then propagated that rewritten block. Because they evaded these MEV-bots, and since there was no other competing block being propagated, that new, rewritten block was the winning block. That block went on-chain and the validator walked away with a lot of money.

Needless to say, they were slashed for this inappropriate behavior, because technically, they violated the consensus rules by signing two distinct blocks - one invalid - and breaking the rules. But even if they were slashed the full 32 ETH, that's a low cost for getting $25 million in return.

So that's an example of something that can go wrong in a slot under an attack scenario. This attack scenario is no longer available, as the relays patched the vulnerability within about 12 hours. They also implemented a whole number of other fixes to try to guard against these kinds of timing attacks. But that is a scenario that can happen.

What happens when you have these sorts of issues with slots? The typical things:

- A trader can have problems with settlement, which is bad for the trader.

- A validator is going to have problems with their payout; they will miss a slot, they're going to not get the payout that they're expecting. If you're an individual validator that could be pretty severe, because you only maybe get one opportunity every three months. So you definitely rely on not having a failure when it's your turn. For larger pools, they can average things out a bit.

- In the case of the builder, if the slot doesn't work the builder is going to lose the profits they would normally get off of building for that particular slot.

- And of course, relay operations are affected. From Blocknative's perspective, when these sorts of missed slots or other activities happen where a slot is negatively impacted, we have a lot of operational work to do with the validator to understand what happened. Was it a latency issue? Is this a configuration issue on the part of the validator? There are all kinds of reasons why it could happen. There's a human cost to service that.

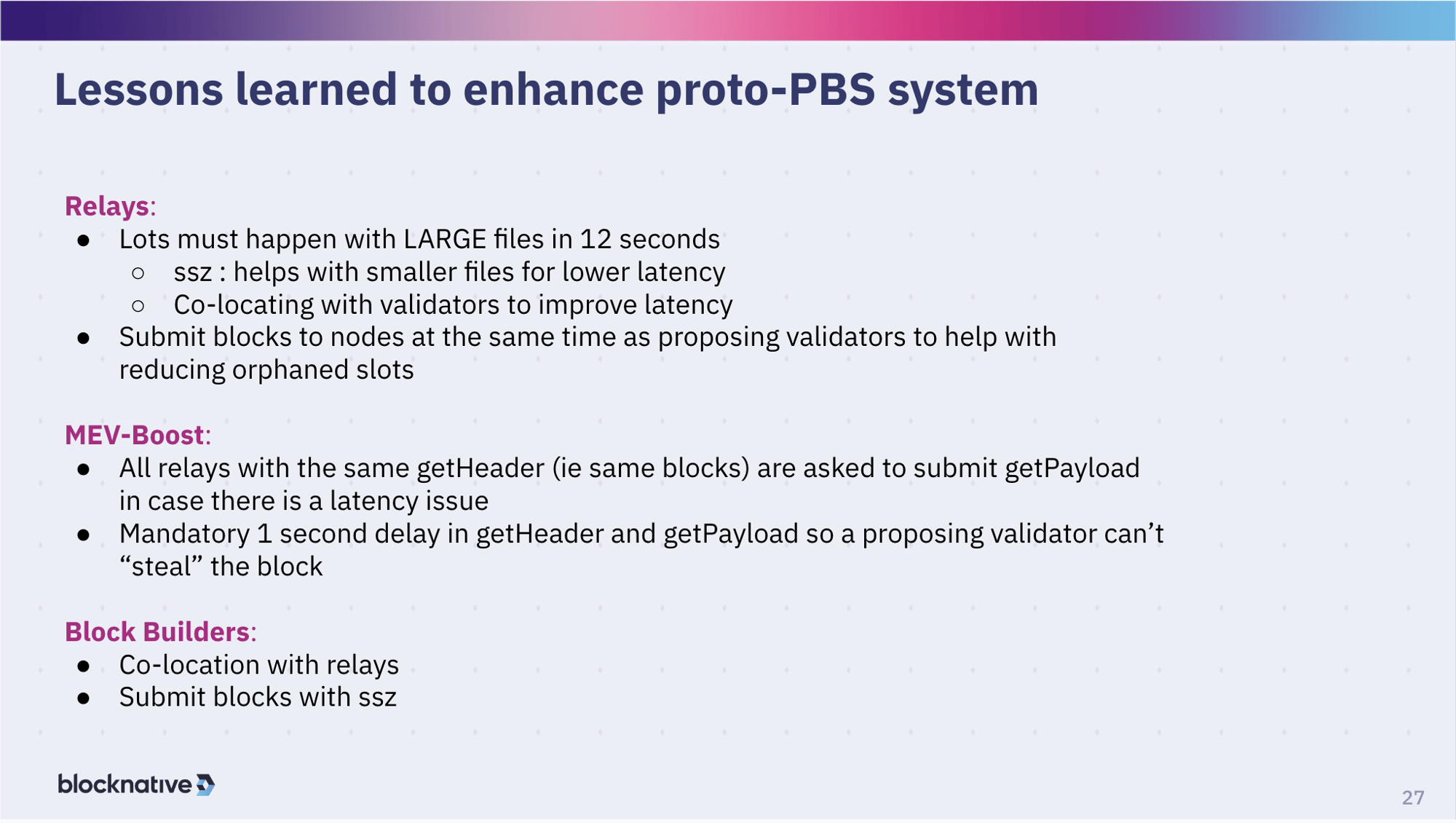

Lessons learned to enhance proto-PBS system

So what have we learned? What relays have learned is that this latency thing is absolutely crucial. So there's a lot of effort to continue to improve the latency game. We're using SSZ (Simple Serialize), a compact binary encoding, so that the data being moved around moves faster, since blocks are really large. Validators are co-locating with relays; that's pretty common practice. Builders are co-locating with relays; that's even more common. And now, if the relay has access to the block that is being propagated, it will propagate the block as well, to accelerate things, prevent any kind of timing games, and reduce any orphaning of slots.

And then MEV-Boost has been updated to further reduce latency issues. Remember that MEV-Boost has this interesting property where the function that gets the bid, getHeader, is an unsigned request that anybody can call. So relays are serving bid requests from a lot of people: from builders, from validators, and from everyone else. There's a latency issue there, because they're serving probably thousands of getHeader requests per second. And then there's some discussion about adding a delay between getHeader and getPayload, to reduce the chance of a validator stealing a block, that is, getting the payload and doing something with it before the signed header has really started to propagate. And of course, as I mentioned, builders are co-locating and using compression.

There's still a ton of work to do on ePBS. ePBS, or enshrined PBS, is where we don't necessarily need to use relays. But it's not clear how long that's going to take. Probably a few years, if ever, actually.

There's a lot of experimenting being done there: builders having to stake so that they can't build blocks that can't be properly verified, and all kinds of other proposals, some of which are being tried out on relays. In fact, the relay community is our ePBS testbed.

In the future, there will be single slot finality, which creates some interesting problems. I mentioned earlier that you have these committees of 128 validators. If you need to do single slot finality, you need to have way more validators on that slot. This creates a whole new host of problems, including new latency issues. If you can get all validators to validate a single slot, then you can do single slot finality, but that's a serious bandwidth issue.

On the execution layer, you have danksharding and proto-danksharding, which is going to change some of the dynamics, especially on the bandwidth side, as well. Builders getting it to relays, relays getting it to validators, that changes the latency game.

Finally, there's a lot of work being done by various entities in pre-confirmations. Since you know the proposer that is theoretically going to own the slot, then there may be some interesting pre-confirmation possibilities there.

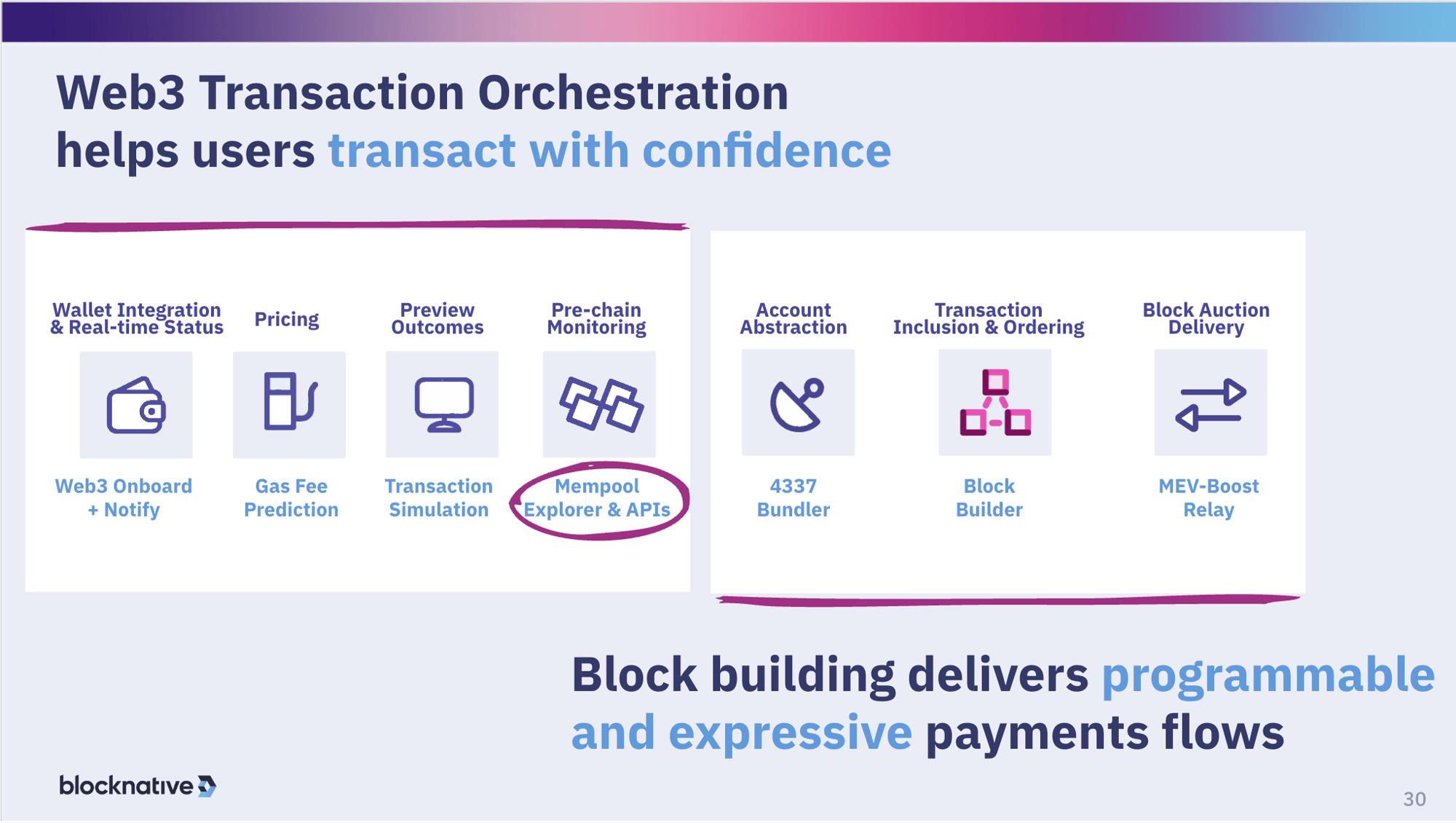

Blocknative is very involved in this as a builder and a relay. We also have numerous other products as a core infrastructure provider for Ethereum. We get involved in pretty much all of these base layer protocols, as well as more and more protocols on top of that, like 4337 and L2s.

Continue the conversation

That concludes our presentation. I'm happy to continue this conversation with anyone on our Discord. Here’s a link for that, if anybody wants to check it out. Otherwise, it's been a pleasure speaking to you today. Thank you very much.
